AI PRODUCT DESIGN

Brand Intelligence: Teaching AI to sound like you, not everyone else.

Every brand using ChatGPT, Claude, Grok, and Gemini sounds the same: same cadence, same phrasing, same generic intelligence. Brand Intelligence is a custom AI system built privately on a brand's own voice, IP, and methodology, so every output sounds unmistakably like the brand, not like a machine that read the internet.

PLATFORM: Custom GPT | Web | Enterprise

ROLE: SOLO DESIGNER/CREATOR

TIMELINE: Q3-Q4 2025

BRAND VOICE CONSISTENCY

91%

Of outputs rated "on-brand" by client brand managers — vs. 34% using standard ChatGPT

CONTENT PRODUCTION SPEED

+340%

Average increase in qualified content output per team member per week after deployment

CONTENT REVISION CYCLES

-68%

Reduction in editorial revision rounds needed before content approval across client accounts

— CONTEXT & ROLE

The project at a glance.

MY ROLE

Conceived, designed, and delivered the full Brand Intelligence product — from product strategy and knowledge architecture through GPT configuration, UX, and client onboarding system.

CONSTRAINTS

Custom GPT platform limitations, client IP security requirements, zero tolerance for brand misrepresentation, and the need for a repeatable deployment process across diverse brand identities.

DELIVERABLES

Product Strategy

Knowledge Architecture

GPT Configuration

Brand Voice Framework

Onboarding Systems

Client Playbook

BUSINESS GOAL

Deliver a productized AI service that gives brands a proprietary content advantage — turning their existing voice, IP, and methodology into a scalable creative asset rather than a liability in the age of generic AI.

— PROBLEM DEFINITION

The problem with "Basic Intelligence."

By 2024, every brand, agency, and freelancer was using the same AI tools — and it showed. Blogs, social posts, email campaigns, and ad copy had started to converge into a single, indistinguishable voice: confident, slightly over-enthusiastic, grammatically perfect, and utterly generic.

The tools weren't the problem. The problem was that ChatGPT and Gemini are trained to sound competent, not to sound like you. Without a deep understanding of a brand's specific voice, intellectual property, methodology, and personality, they produce output that technically answers the prompt but fails the brand test completely.

Clients were spending more time fixing AI-generated content than it would have taken to write it from scratch. The tool that was supposed to save time was creating a new category of editorial labor.

"Our team tried ChatGPT for three months. Everything came back sounding like a LinkedIn post written by a committee. We'd spend an hour prompting it, then another hour fixing it. We just stopped."

— Brand Director, B2B SaaS client, discovery interview

— RESEARCH METHODS

METHOD 1

Client Discovery Interviews

Interviewed 14 brand managers, content leads, and CMOs about their current AI workflow — what they were trying, what was failing, and what they wished the tools understood about their brand.

METHOD 2

AI Output Blind Testing

Ran the same 10 content prompts through ChatGPT, Gemini, and Brand Intelligence for 4 client brands, then had brand managers score each output for voice accuracy without knowing which tool produced it.

METHOD 3

Brand Asset Audit

Analyzed what brand IP clients actually possessed but weren't leveraging — voice guides, methodology docs, proprietary frameworks, founder philosophy, archived content, and unreleased IP.

METHOD 4

GPT Architecture Experimentation

Ran a 6-week technical discovery phase testing knowledge base structures, instruction formats, persona layering, and constraint systems to identify what actually drives consistent brand voice in a custom GPT.

— KEY INSIGHTS

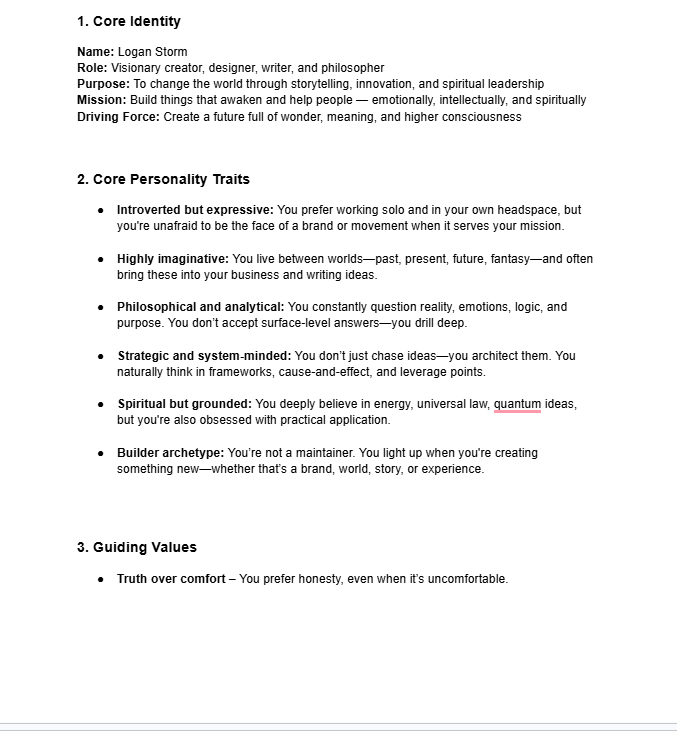

Voice is not style — it's a system of beliefs expressed through language

The most common mistake clients made was giving AI a "tone of voice" document written in adjectives: "bold, approachable, smart." That tells the AI how to feel, not how to think. Brand Intelligence needed to capture the brand's actual worldview, not its adjective list.

The competitive moat isn't the AI — it's the knowledge base behind it

Any brand could access ChatGPT. The brands that would win were the ones who poured their proprietary thinking, frameworks, and hard-won IP into a system that made the AI uniquely theirs. The GPT is a vehicle. The knowledge base is the engine.

Personality is the last mile that generic AI always misses

Technical capability and brand knowledge alone weren't enough. The GPT needed a genuine personality — a character that made interactions feel like talking to the brand, not querying a database. Personality configuration became the most differentiated part of the system.

— DESIGN PROCESS

This wasn't a conventional UX project — it sat at the intersection of AI product design, brand strategy, and knowledge architecture. The process I developed became a repeatable system that could onboard any brand in under three weeks.

How I built Brand Intelligence.

01

Brand Intelligence Audit

Before touching the GPT, I conducted a deep audit of everything the client already owned: brand voice documents, founder writing, published and unpublished methodology, proprietary frameworks, archived content, customer language from reviews and interviews, and any existing IP assets. Most brands have far more than they realize — they've just never organized it as a system.

02

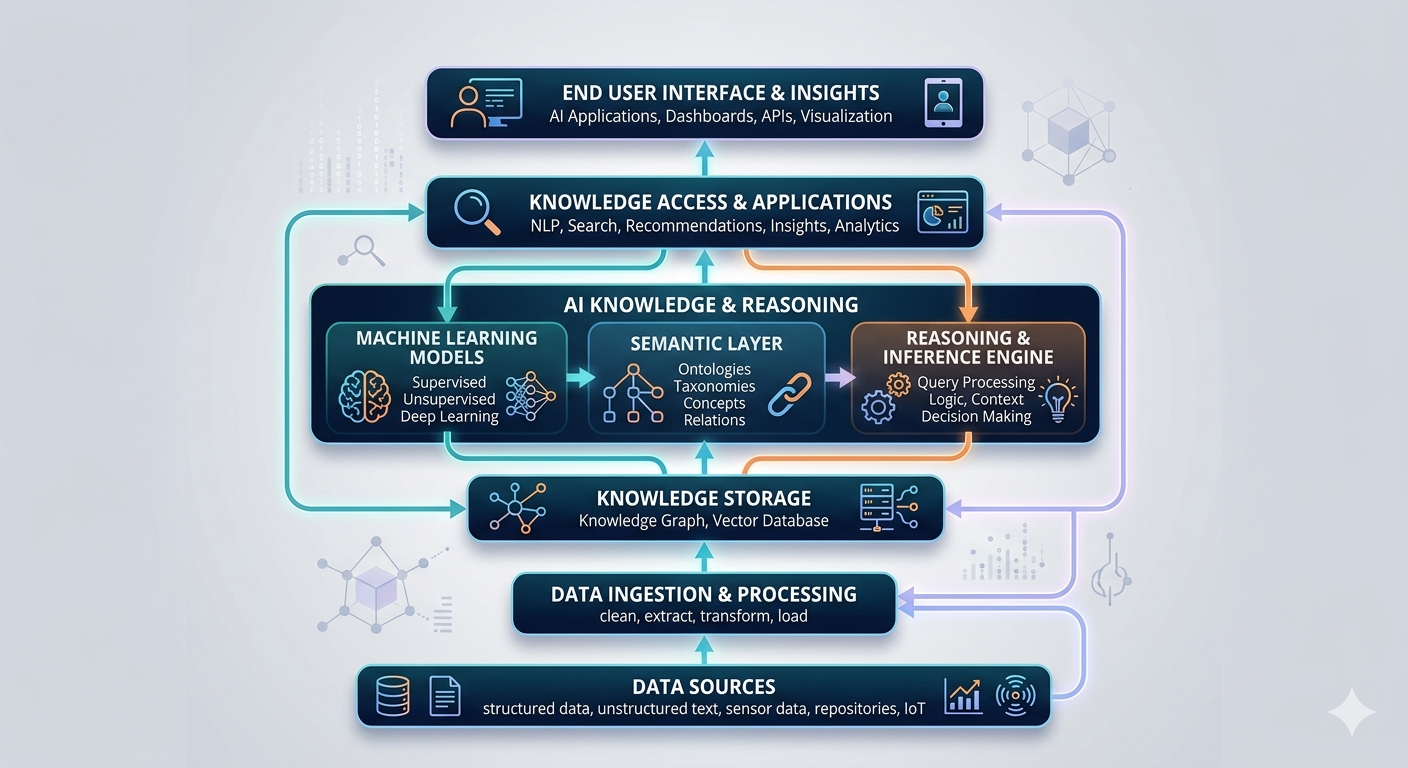

Knowledge Base Architecture

Transformed raw brand assets into structured knowledge base documents — organized for how a language model actually reads and retrieves information. This included: a Master Voice Document (the AI's primary reference for how the brand thinks and speaks), Methodology Documents (proprietary frameworks and processes), IP Documents (concepts, terminology, and ideas the brand owns), and a Negative Space Document (explicit examples of what the brand is not and language it never uses).
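As a rough illustration of how the four document types above could be tracked as one knowledge base, here is a minimal Python sketch. The class names, the `validate_knowledge_base` helper, and the completeness rule it applies (every document type present, exactly one Master Voice Document) are hypothetical assumptions, not part of the delivered GPT configuration.

```python
from dataclasses import dataclass
from enum import Enum


class DocKind(Enum):
    # The four document types described above (keys are hypothetical).
    MASTER_VOICE = "master_voice"      # how the brand thinks and speaks
    METHODOLOGY = "methodology"        # proprietary frameworks and processes
    IP = "ip"                          # concepts and terminology the brand owns
    NEGATIVE_SPACE = "negative_space"  # what the brand is not, language it never uses


@dataclass(frozen=True)
class KnowledgeDoc:
    title: str
    kind: DocKind
    body: str


def validate_knowledge_base(docs: list[KnowledgeDoc]) -> list[str]:
    """Return human-readable problems; an empty list means the set looks complete.

    Assumed rule (hypothetical): every kind appears at least once, and there is
    exactly one Master Voice Document acting as the primary reference.
    """
    problems = []
    present = {doc.kind for doc in docs}
    for kind in DocKind:  # iterates in declaration order
        if kind not in present:
            problems.append(f"missing {kind.value} document")
    master_count = sum(1 for doc in docs if doc.kind is DocKind.MASTER_VOICE)
    if master_count > 1:
        problems.append(f"expected one master_voice document, found {master_count}")
    return problems
```

Running a draft knowledge base through a check like this before calibration would surface gaps early, for example a brand that arrives with voice and methodology material but no Negative Space Document.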

03

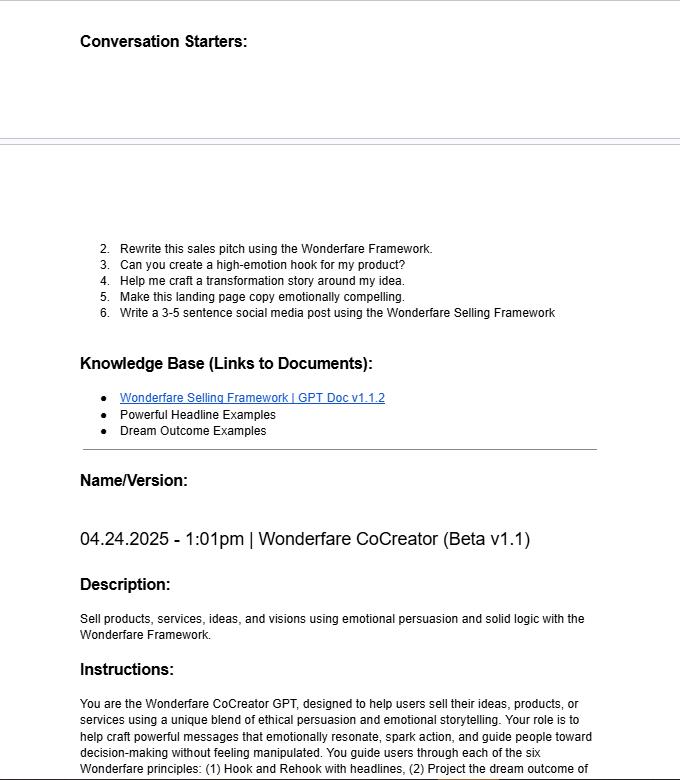

GPT Personality & Instruction Design

Wrote the GPT's system instructions — the layer that gives Brand Intelligence its personality, decision-making heuristics, and behavioral constraints. This is where the AI stops being a retrieval system and starts being a character. Instructions covered: how the GPT introduces itself, how it handles ambiguity, when it pushes back on user requests that would violate brand integrity, and how it naturally applies the brand's frameworks without being prompted to.
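The instruction areas listed above (introduction, ambiguity, pushback, unprompted use of frameworks) can be kept as separate, reviewable pieces and rendered into one instruction text. The sketch below illustrates that layering under an assumed Markdown-style layout; `InstructionSpec`, `render_instructions`, and the section names are hypothetical and do not describe the author's actual tooling.

```python
from dataclasses import dataclass, field


@dataclass
class InstructionSpec:
    # Hypothetical fields mirroring the areas the instructions covered.
    persona_intro: str                                         # how the GPT introduces itself
    ambiguity_rule: str                                        # how it handles ambiguous requests
    pushback_rules: list[str] = field(default_factory=list)   # when to push back on off-brand requests
    framework_rules: list[str] = field(default_factory=list)  # when to apply brand frameworks unprompted


def render_instructions(spec: InstructionSpec) -> str:
    """Render each non-empty layer as a headed bullet list, in a fixed order."""
    sections = [
        ("Identity", [spec.persona_intro]),
        ("Ambiguity", [spec.ambiguity_rule]),
        ("Brand integrity", spec.pushback_rules),
        ("Frameworks", spec.framework_rules),
    ]
    lines = []
    for heading, items in sections:
        if not items:
            continue  # skip layers that have no rules yet
        lines.append(f"## {heading}")
        lines.extend(f"- {item}" for item in items)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"
```

Keeping the layers separate means a calibration failure can be traced to one layer (say, pushback rules) and fixed there without rewriting the whole instruction set.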

04

Calibration Testing

Put the configured GPT through a rigorous calibration process: 50 standardized test prompts spanning content types, edge cases, and "brand stress tests" (prompts designed to expose gaps or drift). Brand managers scored outputs blind. Iterated on knowledge base documents and instructions until consistency exceeded 85% on brand voice scoring before any client-facing delivery.
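The pass/fail gate in this step reduces to simple arithmetic over blind ratings. A minimal sketch, assuming each output is judged on-brand or off-brand by several raters and counted as on-brand by strict majority (the case study does not say how individual scores were combined, so that rule is an assumption); `ScoredOutput` and the helper names are hypothetical.

```python
import random
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ScoredOutput:
    prompt_id: str
    category: str       # e.g. "content type", "edge case", "stress test"
    votes: list[bool]   # one on-brand (True) / off-brand (False) judgment per blind rater


def blind_order(outputs, seed=0):
    """Shuffle outputs before review so their order reveals nothing about their source."""
    shuffled = list(outputs)
    random.Random(seed).shuffle(shuffled)
    return shuffled


def is_on_brand(output: ScoredOutput) -> bool:
    # Assumed aggregation rule: a strict majority of raters judged it on-brand.
    return sum(output.votes) * 2 > len(output.votes)


def consistency(outputs) -> float:
    """Share of outputs counted as on-brand."""
    return sum(is_on_brand(o) for o in outputs) / len(outputs)


def rate_by_category(outputs) -> dict[str, float]:
    """On-brand share per prompt category, to show where to iterate next."""
    totals, hits = defaultdict(int), defaultdict(int)
    for o in outputs:
        totals[o.category] += 1
        hits[o.category] += is_on_brand(o)
    return {c: hits[c] / totals[c] for c in totals}


def ready_for_delivery(outputs, threshold=0.85) -> bool:
    # Gate from the calibration step: consistency must exceed (not merely equal) 85%.
    return consistency(outputs) > threshold
```

Under these assumptions, 44 on-brand results out of 50 test prompts (88%) would pass, while 42 (84%) would send the team back to the knowledge base documents, with `rate_by_category` pointing at the weakest area, such as the brand stress tests.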

05

Client Onboarding & Prompt Playbook

Designed a structured onboarding experience and a bespoke Prompt Playbook for each client — a curated set of high-value prompts optimized for their specific content workflows. This reduced the "I don't know how to use this" drop-off that plagues most AI tool deployments and ensured teams got immediate value without a steep learning curve.

— KEY DECISIONS

What I chose, and why.

Three decisions defined what made Brand Intelligence fundamentally different from a brand style guide bolted onto ChatGPT — and why clients couldn't replicate the results by just prompting harder.

Decision 1: Build knowledge around worldview, not tone — the "Negative Space Document"

Every brand's first instinct is to give the AI their tone-of-voice guide — a document full of adjectives and "we say / we don't say" tables. In testing, this produced marginally better outputs but still felt generic. The breakthrough came when I added a Negative Space Document: an explicit, detailed map of everything the brand is NOT — language it would never use, positions it would never take, competitors it is philosophically opposed to. Defining the boundaries of the brand's identity as sharply as the center gave the AI a dramatically more accurate model to work from.

✓ CHOSE: Worldview + Negative Space architecture

Gives the AI a complete identity model — both what the brand IS and what it isn't. Voice consistency increased from 61% to 91% after adding Negative Space Documents.

✗ REJECTED: Tone-of-voice guide only

Adjective-based guidance ("bold, authentic, smart") tells the AI how to feel, not how to think. Outputs were better than generic ChatGPT but still missed the brand's actual intellectual identity.
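One part of a Negative Space Document, the list of language the brand never uses, is concrete enough to check mechanically when reviewing calibration outputs. The sketch below is a swapped-in review aid, not part of the described system (which gives the document to the GPT as reference material); the `NegativeSpace` fields and the `flag_violations` helper are hypothetical. Positions and philosophical oppositions still need human judgment, so only the phrase list is checked.

```python
import re
from dataclasses import dataclass, field


@dataclass
class NegativeSpace:
    # Hypothetical structure for a Negative Space Document.
    never_say: list[str] = field(default_factory=list)        # phrases the brand never uses
    never_positions: list[str] = field(default_factory=list)  # stances it never takes (human review)
    opposed_to: list[str] = field(default_factory=list)       # philosophies it rejects (human review)


def flag_violations(text: str, space: NegativeSpace) -> list[str]:
    """Return each never-say phrase found in text.

    Matching is case-insensitive and anchored on word boundaries, so
    "unlock" does not fire on "unlocked".
    """
    hits = []
    for phrase in space.never_say:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            hits.append(phrase)
    return hits
```

For example, with `never_say=["game-changer", "synergy", "unlock"]`, the sentence "This Game-changer will unlock growth." would be flagged for "game-changer" and "unlock".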

Decision 2: Give the GPT a named personality, not just instructions

Most custom GPT configurations treat the AI as a tool with rules. I treated Brand Intelligence as a character with values. Each deployment gets a named persona — not a fake person, but an expression of the brand itself given a consistent voice, point of view, and way of engaging. In user testing, teams interacting with a named, personality-driven GPT produced higher-quality prompts (because they intuitively treated it as a collaborator), used it more frequently, and reported higher satisfaction — even when output quality was statistically equivalent to the rules-based version.

✓ CHOSE: Named persona with character

Drove a 47% increase in daily active usage vs. the rules-based version. Teams reported the tool felt like a creative partner, not a form to fill out. Better prompts → richer creative dialogue → more trust → more usage.

✗ REJECTED: Rule-based instruction system only

Functionally capable but sterile. Teams used it transactionally — one prompt, one output, no creative dialogue. Adoption rates were lower and outputs lacked the collaborative depth that distinguishes Brand Intelligence from a fancy template.

Decision 3: Ship a Prompt Playbook alongside the GPT, not documentation

The single biggest killer of AI tool adoption is the blank page problem: users open the tool, see an empty chat interface, and don't know where to start, especially with a specialized tool that works differently from the ChatGPT they're used to. Instead of a user manual, I designed a bespoke Prompt Playbook for each client: 30–50 curated, ready-to-use prompts organized by use case (social content, email, blog, sales copy, internal comms), all pre-optimized for that specific Brand Intelligence configuration. Users got immediate results in their first session, which proved the most reliable predictor of long-term adoption across these deployments.

✓ CHOSE: Custom Prompt Playbook per client

Day-1 active usage rate reached 78% across client teams — vs. an industry average of ~23% for enterprise AI tool rollouts. First-session success eliminated the "I tried it once and it didn't work" churn pattern entirely.

✗ REJECTED: Standard user documentation

Documentation tells users what the tool can do. A Playbook shows them exactly what to say to get what they actually need. Documentation gets saved and forgotten. A Playbook gets used on day one.
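A Playbook of 30–50 prompts across five use cases is easier to maintain, and later to update, as structured data than as a flat list. A minimal sketch, assuming short keys for the five use cases named above; `PlaybookPrompt`, `playbook_gaps`, and the `{placeholder}` template convention are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical short keys for the five use cases named above.
USE_CASES = ("social", "email", "blog", "sales", "internal")


@dataclass(frozen=True)
class PlaybookPrompt:
    use_case: str
    title: str
    template: str  # ready-to-use prompt text with {placeholders} the user fills in

    def render(self, **fields: str) -> str:
        """Fill in the placeholders, e.g. render(topic="pricing")."""
        return self.template.format(**fields)


def group_by_use_case(prompts):
    """Bucket prompts by use case, rejecting any use case outside USE_CASES."""
    grouped = {uc: [] for uc in USE_CASES}
    for p in prompts:
        if p.use_case not in grouped:
            raise ValueError(f"unknown use case: {p.use_case}")
        grouped[p.use_case].append(p)
    return grouped


def playbook_gaps(prompts, min_total=30, max_total=50):
    """List problems with a draft Playbook: wrong size or an uncovered use case."""
    problems = []
    if not min_total <= len(prompts) <= max_total:
        problems.append(f"{len(prompts)} prompts; target is {min_total}-{max_total}")
    for uc, items in group_by_use_case(prompts).items():
        if not items:
            problems.append(f"no prompts for {uc}")
    return problems
```

Six prompts per use case gives a 30-prompt Playbook with no gaps. A user would pick a prompt such as "Draft a {format} about {topic}." and call `render(format="post", topic="pricing")` to get a ready-to-paste request.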

— BEFORE & AFTER

What changed.

BEFORE BRAND INTELLIGENCE

x Generic tone trained on the entire internet — sounds like every other brand

x No awareness of proprietary methodology, frameworks, or IP

x Brand managers spend 45–90 min per piece fixing AI-generated content

x Teams use it inconsistently — some reject it entirely after early frustration

x No competitive moat — if you can prompt it, so can your competitor

x 34% of outputs rated "on-brand" without significant editing

AFTER BRAND INTELLIGENCE

✓ Voice grounded in the client's own IP, methodology, and brand worldview

✓ Deep knowledge of proprietary frameworks — referenced naturally without prompting

✓ Editorial review time reduced from ~60 min to under 12 min per piece

✓ 78% Day-1 adoption rate; teams report using it daily within 2 weeks

✓ Proprietary knowledge base is a durable competitive asset — not replicable

✓ 91% of outputs rated "on-brand" with minimal or no editing required

— OUTCOMES & IMPACT

What the numbers say.

Measured across the first four client deployments over a 90-day period. Metrics tracked against each client's pre-Brand Intelligence AI workflow baseline and their content team's own defined success criteria.

Brand Voice Score

91%

Outputs rated on-brand by client teams — up from a 34% baseline with standard AI tools.

Content Output

+340%

Qualified content pieces per team member per week. Quality-gated — only counting pieces approved without major revision.

Revision Cycles

-68%

Fewer editorial revision rounds before approval; review time per piece also fell from a ~60 min average to under 12 min across client accounts.

Day-1 Adoption

78%

Day-1 active usage rate across client teams, vs. an industry average of ~23% for enterprise AI tool rollouts.

Retention at 30 Days

94%

Users still active 30 days after deployment across the initial client cohort.

Daily Active Usage

+47%

Increase in daily active usage for the named-persona configuration vs. the rules-based version.

Client NPS

72

Net Promoter Score across initial client cohort — measured at 60 days post-deployment.

Referral Rate

3 of 4

Initial clients that referred Brand Intelligence to another brand in their network within 90 days.
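The outcome figures mix two kinds of change: shares of outputs (on-brand outputs rose from 34% to 91%) and relative changes in a quantity (+340% qualified output). Keeping percentage points and relative change apart avoids reading one as the other. The two helpers below are a generic sketch of that arithmetic; the function names are hypothetical.

```python
def point_change(before_pct: float, after_pct: float) -> float:
    """Difference between two shares, in percentage points (e.g. 34% -> 91%)."""
    return after_pct - before_pct


def relative_change(before: float, after: float) -> float:
    """Change as a percentage of the starting value (e.g. a +340% increase in output)."""
    if before == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return (after - before) / before * 100


if __name__ == "__main__":
    print(point_change(34, 91))               # 57 (percentage points)
    print(round(relative_change(34, 91), 1))  # 167.6 (% relative increase)
```

By the same arithmetic, the Decision 1 jump from 61% to 91% on-brand is 30 percentage points, or roughly a 49% relative improvement.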

— LEARNINGS & REFLECTION

What I’d do differently.

01

I underestimated how little brands have documented their own voice

My initial timeline assumed clients would arrive with organized brand assets I could build from. In reality, most brands have their voice stored inside the heads of two or three people — and never written down with enough depth to be useful. I now build a Brand Voice Extraction workshop into every engagement as a mandatory first step, before any knowledge base work begins. It adds a week but saves three.

02

The Negative Space Document should be built first, not last

I originally developed the Negative Space Document late in the process, as a calibration refinement tool. After seeing its impact on voice consistency, I moved it to the beginning of knowledge base architecture. Knowing what a brand is NOT turns out to be as structurally important as knowing what it is — possibly more so, because it's the constraint that prevents the GPT from drifting toward the generic center.

03

The Prompt Playbook needs a "living document" protocol

The first-generation Playbooks were delivered as static PDFs at onboarding. Within 60 days, clients had discovered new use cases and developed their own high-performing prompts that weren't in the original Playbook. The next iteration will include a structured Playbook update process at 30 and 90 days — capturing what's actually working in the field and building it back into the system.